Three. BIG. Things. 3/1

If you missed it, we got it. An art, tech, whatever newsletter.

Don’t miss an edition, hit the ☝️☝️ subscribe button ☝️☝️ right up here. And catch up on every edition that passed you by.

This Week’s Three BIG Things:

Citrini Research Imagines a Dying Economy

Is Anthropic Really our Last Line of Defense?

The Metaverse’s Loudest Proponent Gives Up on the Metaverse

Okay, Let’s Get On With It

1. Short Fiction is so Fucking Back

A hearty “How you like them apples?” to everyone who’s ever declared fiction or the written word dead. Turns out, with a little bit of research and a solid premise, even a bite-sized 7000-word short story can still change the world, if by “change the world” you mean throw the American stock market into a momentary (for now) downward spiral.

We talked about this at length on Thursday’s MOCA Town Hall, but I find myself unable to keep from discussing this Sunday essay from Citrini Research. Its authors are top Substack financial writers “James Van Geelen, chief executive of Citrini Research, and Alap Shah, a Florida-based AI entrepreneur and investor,” and the title more than deserves its all-caps, bold-faced original stylization: “THE 2028 GLOBAL INTELLIGENCE CRISIS.” Citrini Research itself is a market research firm dedicated to investigating “the transformative ‘megatrends’ poised to shape the market’s distribution of returns for years to come,” according to their official website. Essentially, they conduct thematic market research and then design investment products using that research as a base. You’d be forgiven for never having heard of them, at least until Sunday, when they became the de facto topic du jour of The New York Times, Bloomberg, Yahoo Finance, The Financial Times, The Wall Street Journal, Fortune, Barron’s, Vox, Reuters and others; the list of major publications with Citrini in their purview goes on and on.

And all because of a short story! Really! “THE 2028 GLOBAL INTELLIGENCE CRISIS” is a step-by-step prognostication of how AI technology may slowly destabilize the entire American economy over the course of two years. At the very outset of the story, Van Geelen and Shah write, “What follows is a scenario, not a prediction.” Thus, as the authors detail the beginnings of AI market disruption, the proliferation of AI agents, the widening of impact outwards from specific sectors to the entire economy, the effects on individual (now oft-unemployed) workers, the unwinding of long-term market interdependencies, and the too-slow adaptation of culture and government, there remains a kind of winking-at-the-audience understanding that “Yes, this is plenty plausible, but it’s still crazy.”

“Plenty plausible” was as far as many very intelligent people got.

As of this sentence’s writing, the initial Citrini tweet has 10 million impressions, 21,000 bookmarks, 14,000 likes, almost 4,000 retweets, and just under 900 comments. But even those numbers don’t capture how seismic an effect the story had on broader markets, specifically tech stocks. In his eponymous newsletter, writer Derek Thompson says:

“Many of the companies name-checked in the essay saw their stocks plunge. The Wall Street Journal reviewed the day’s carnage under the headline ‘Viral Doomsday Report Lays Bare Wall Street’s Deep Anxiety About AI Future.’ Some details:

Software firms Datadog, CrowdStrike and Zscaler each plunged more than 9%. International Business Machines’ 13% decline was its worst one-day performance since 2000. American Express, KKR and Blackstone—all name-checked by Citrini—tumbled…

Shares in DoorDash also veered 6.6% lower Monday after Citrini’s Substack note called the delivery app a ‘poster child’ for how new tools would upend companies…”

I often turn to Thompson as my tech/market guru, so I find his newsletter on the topic, “Nobody Knows Anything,” an instructive jumping-off point for this story. He says, “If I had to pick three words to summarize this collective expert view of the future, I could not in a million years, or with a trillion tokens, find three words more suitable than these: Nobody knows anything…What I mean is that the frontier labs don’t really know what they’re building exactly, and economists don’t know how to model the thing that they claim they’re building. As a result, nobody really knows what is going to happen with AI this year, or next year, or the year after.”

It’s a compelling and salient, if frightening, idea. No wonder a very well-written story about AI’s market effect would be so destabilizing to the markets it discusses. The economy is already balancing on a wobbly three-legged stool. The “three-legged stool” is a narrative tactic I’ve gleaned from writers’ workshops: a fictional situation or world or relationship must be fundamentally off-kilter in order to be compelling; the wobble is the conflict. And so Citrini drops this short story into a world that, within a week, would see Jack Dorsey’s financial services company, Block (parent of Square and Cash App), laying off half of its workforce “driven, at least officially, by AI,” according to TechCrunch. Uncertainty has long been palpable. On top of that, Citrini’s story is extensively researched. I’m by no means an economist or financial researcher or trader, but I’m not alien to market terminology. Even still, Citrini’s story often veers away from common English and into a class of acronyms, idioms, and concepts rarely spoken outside of Wall Street. Citrini spoke to the market in the market’s own language. Imagine you’re lost in a foreign city at night, Istanbul, let’s say, and someone walks out of an alley, bumps into you with their shoulder, and in perfectly accented English says, “Something very bad may happen if you continue down this road.” If you are anything like me, you’d probably feel your legs get woozy. And then you’d turn right around.

The economy cannot turn itself around, but it can certainly get woozy. And every time it does, the collective financial media responds in one of a few ways:

Theory-crafting a la Thompson and The Financial Times

Vociferous counter-arguments that seek to squash the spooky thing, as seen in Bloomberg, Seeking Alpha, and this Substack from Noah Smith.

New information used to justify past predictions, as we’re seeing currently with the aforementioned Block layoffs, which Barron’s and Business Insider specifically linked to Citrini’s story.

I imagine we’ll oft be seeing “Citrini” referenced casually in market reports. They might even have their name affixed to a kind of AI sell-off going forward. A term like “the Citrini Effect” or “a Citrini cycle” could come into vogue. But as I watch all of this going on, with less idea than many of these other writers about what’s going on, I’m reminded of that early NFT boom period in 2021.

One of the most frequent disagreements during that time was whether the market followed narratives or whether narratives followed the market. There was such uncertainty about where value should gather that a convincing narrative was enough to convince investors to purchase this over that NFT project. Every speculator wanted to understand and thus beat the trend, because that’s where the money was, getting in just before the 10x or 100x. This has been played out again and again in crypto, often applicable to art (in a way), and most recently in the memecoin mania of late 2024. Any massive price spike for a project was deemed, in hindsight, predictable. Look at their community! They’re making a game? Pranksy is invested? Every piece of information was some sign overlooked to justify price. Narrative followed the market. But then you had project founders like Frank from DeGods, for example, who learned to manipulate hype. Announcing announcements. Secret whitelists. The promise of something big coming soon, which was narrative-enough to justify investment. It’s why every PFP followed the lead of Bored Ape Yacht Club and released a road map. If they didn’t, their holders demanded one. Therein, one could see when future value could accrue, we could anticipate hype, and the market could erupt accordingly. That was much more juvenile and, in a way, more primal than what’s going on with AI, but I think there are similarities. Here’s one: Citrini Research was a name known by few on Saturday, and after affecting the market, is now lodged in everyone’s mouths.

The AI market, like that NFT bubble, is a very well-financed yet very dark pit. Maybe more like a tunnel system. How do we get out? Where does it lead? That’s anybody’s guess. Hence Thompson’s assertion that “Nobody knows anything” (originally a quote from Hollywood screenwriting master/guru William Goldman, describing the movie-making process). Nobody knows the right way through, only that these tunnels go on for a long, long way. But hype in AI is now provably close to being hackable itself. Citrini Research has published a playbook on how to achieve a widely distributed market effect. You need a preexisting following, you need meticulous research, and you need a solid hook. That won’t be widely possible, but it will be accessible. And all that needs to happen thereafter is for market effects to confirm a theory/story/essay/prediction for tethers of value to form. From now on, Citrini Research is going to have a hand in moving markets. They will be looked to as torchbearers because they were “right” once, even if being “right” only meant the narrative justified itself after the fact. It’s possible that their every bold prediction henceforth plays out in the market one-to-one, now that they are being seen as gurus. And so markets will follow narrative again. And it will go like this, just as it did with NFTs, market and narrative following one another here, reversing course there. Every sufficiently unstable market will cede to that same effect. The stool only has a few ways in which to wobble, but it’s impossible to predict which way it will wobble next. This week, it was a dystopian short story. Next, it may be a tweet, the announcement of a joint task force, a round of layoffs, an acquisition, who knows. All the stool needs is a little push.

But nothing in NFTs played out as we thought. When I was writing microfiction for a Solana PFP called Galactic Geckos Space Garage (part-time, paid pretty well though), the agreed-upon strength of their lore (not to toot my own horn) was treated as confirmation of their eternal importance. Until it wasn’t. When a little-purchased project called Taiyo Robotics was suddenly snapped up by the founder of a marketplace called SolPort, it spent the following few years as one of the highest-priced NFT assets on Solana. They crashed. They revived! They crashed again! They bounced! By that point, no narrative was strong enough to stick long because the market had stopped trailing after narrative. The collective belief in narrative’s importance gave the narratives market power. That alone.

My fear (if you can call it a fear) is not that these AI narratives will be self-fulfilling prophecies. They certainly will, at least for a time, at least at times. My fear is that people will believe this nascent market predictable, and they will forget that the stool remains wobbling, that its logic system, even if for a long time consistent, can suddenly shift to a new leg. That destabilization will be much worse because it will somehow be seen as a surprise. It’s not just that nobody knows anything. This week proves that. Much more important is that we never believe for even a moment that anyone really does. That misplaced confidence has laid many low before. The tunnels just go on and on and on. The stool does not magically grow a fourth leg.

2. Anthropic vs. The Pentagon

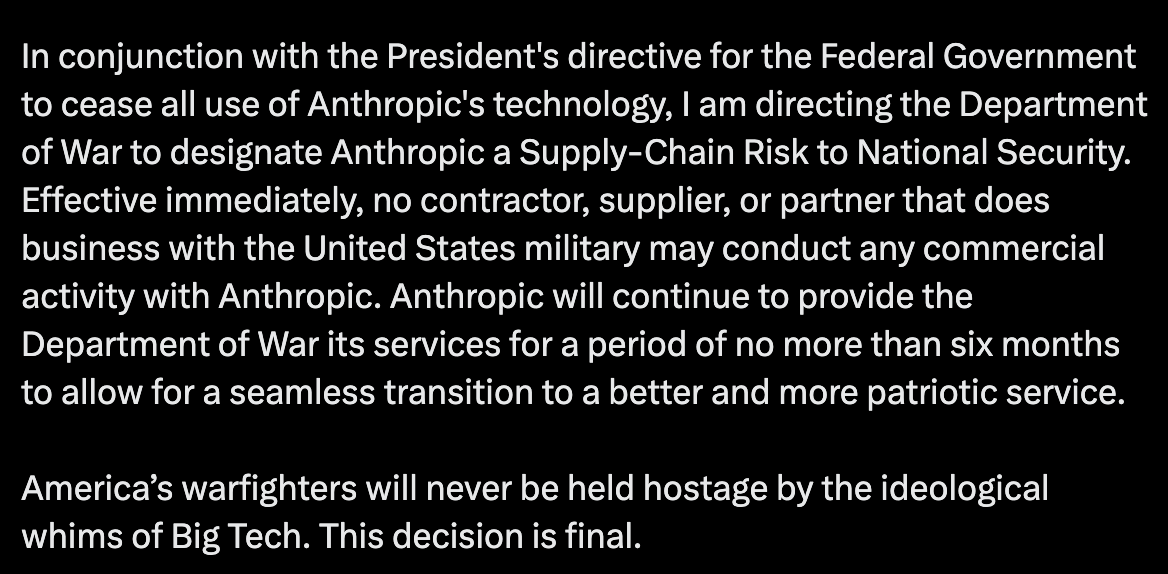

Not all dick-swinging contests are as material as the one currently going on between Anthropic, the frontier AI lab responsible for Claude, and the United States Department of War (formerly of Defense), headed by Secretary of War (formerly of Defense) Pete Hegseth. Sorry for all the commas. As reported by Hadas Gold and Haley Britzky at CNN a few days ago, “Defense Secretary Pete Hegseth gave Anthropic CEO Dario Amodei a Friday deadline to comply with demands to peel back safeguards on its AI model or risk losing a Pentagon contract. He also threatened to put the AI company on what could amount to a government blacklist.”

CNN notes that, “At issue is the guardrails Anthropic placed on its AI model Claude. The Pentagon, which has a $200 million contract with Anthropic, wants the company to lift its restrictions for the military to be able to use the model for ‘all lawful use.’” Of course, the body that makes laws has a vested interest in widening the meaning of “lawful use,” but we’ve spoken a lot about misaligned incentives these past few weeks, and I trust you can fill in the blanks.

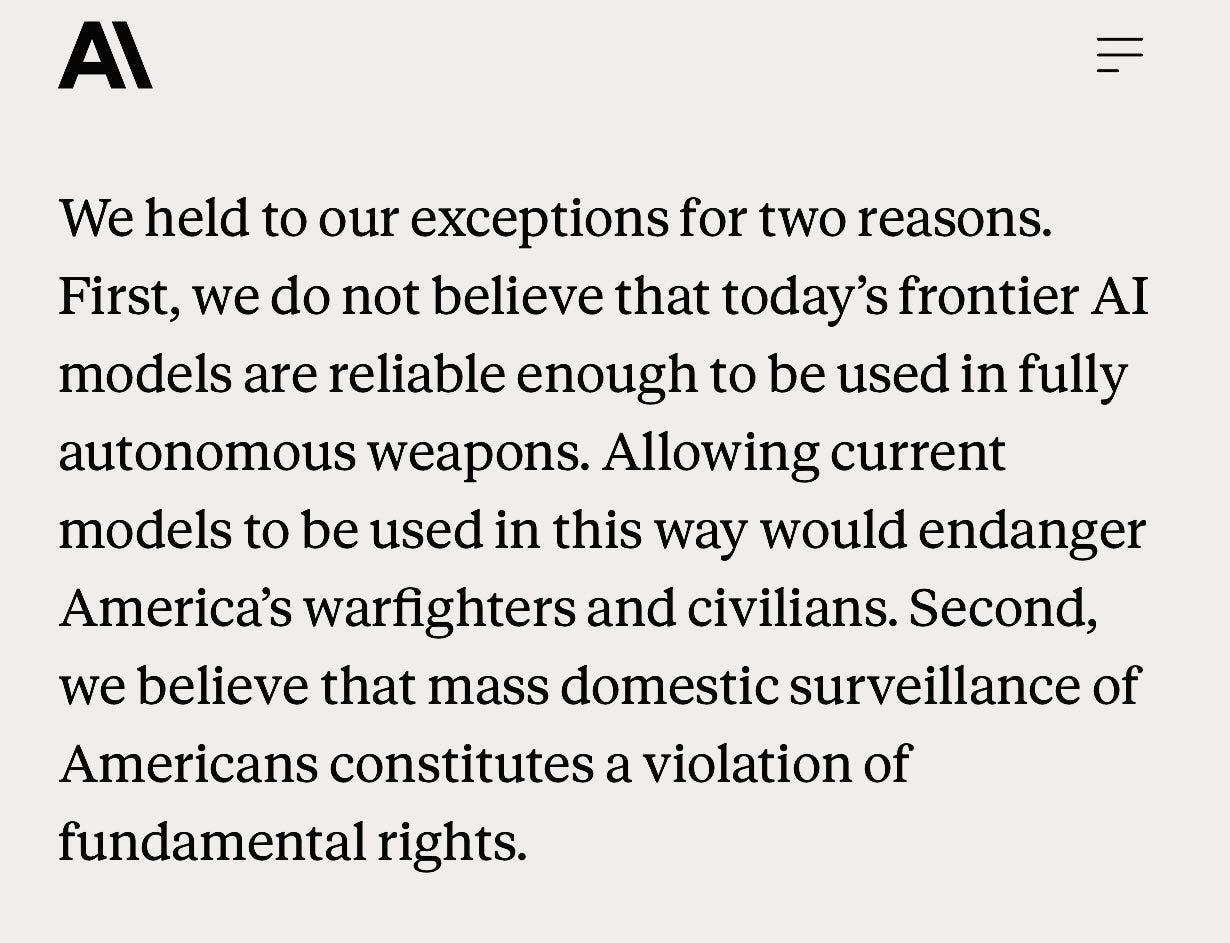

As per David Lawler and Maria Curi at Axios, “Anthropic’s hardline approach… disallows their ‘model to be used for the mass surveillance of Americans or the development of weapons that fire without human involvement.’” Those are specific promises, but they encompass most of the AI fears felt by the wider American public (if the Ring fiasco was any indicator). Few were certain how this saga would play out, if Anthropic would really sever a tasty, $200-million government contract for the sake of professed morals. And yet, the deadline passed and Anthropic had not wavered in their commitment, instead putting out the following statement:

Soon after, the Defense/War Secretary responded in kind:

A strong condemnation, even though behind the scenes, “The Pentagon says it wants to continue talks with Anthropic after they formally refused the Department of War’s demands,” according to Polymarket (Hegseth’s comments are more recent than Polymarket’s report).

In the aftermath of Anthropic’s refusal to back down from the Pentagon’s demands, other AI companies have at least somewhat appeared to follow suit. Though a community note on the following post says (in full) “Government officials have contradicted Sam’s claim, saying OpenAI will allow the DoW to use their models for ‘all lawful purposes.’ This could allow for uses Anthropic refused to engage in, namely mass surveillance tools and weapons systems with no human oversight,” OpenAI CEO Sam Altman nevertheless announced that the company had reached their own agreement with the Department of War/Defense, replacing Anthropic outright. Altman says:

“AI safety and wide distribution of benefits are the core of our mission. Two of our most important safety principles are prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems.”

That’s about as clear a response to the aforementioned fears as he could have written, so it’s interesting to me that there would be uncertainty around his claims, given he did not seemingly talk around them at all. Some people on Twitter point out subtle differences in the language used in this new OpenAI agreement, though I have to imagine a consumer-public/market made foolish by fine print would respond angrily indeed. OpenAI’s footing is already shaky given the insipid response to its new ad-supported business model.

I’m not surprised, given the petty squabble we talked about last week, that OpenAI would immediately step into a void Anthropic left open, though it seems as if Anthropic set themselves up as a potential sacrificial lamb for the industry, demonstrating a hard-line approach with the most powerful government in the world and, in doing so, setting themselves fundamentally apart from any other less-principled company. Any other company would appear less-principled by nature. No wonder images like this began to propagate throughout Twitter:

That photo is AI, by the way.

In many ways, the ultimate fight for supremacy in AI is a fight for public preference. Look at Apple, whose staunch approach to protecting data privacy has placed them in a singular tier of trust away from other titanic tech companies with ruined reputations like Meta or Alphabet. Winning the war for public opinion is a matter of trust and capitulation. The bearish AI despisers will all fall in with the technology eventually; as it becomes more and more widespread they will have no choice. To which company will their principles take them? To a data-exploitative, ad-heavy, warfare-agnostic company like OpenAI? Or something equally useful with demonstrated principles? My gut feeling is that Anthropic sees a world in which their product capabilities will remain essentially on par with OpenAI’s, and even if an organization like the Pentagon never comes back, they will make up for that deficit in what has amounted to a sterling publicity stunt. OpenAI and Altman coming off even more villainous in public perception. Dario Amodei getting beatified on Twitter. I have to say, Anthropic’s choice has made more of an Amodei believer out of even this cynical writer. How many dominos have already fallen in Anthropic’s direction, after losing out on what is about the general market budget for a Marvel film?

3. Even I Missed This: Meta Pulls Out of the Metaverse

When I first started writing this newsletter a few years ago, I would always start with some simple Google searches. For AI, obviously. And NFT, which even in 2023 and 2024 yielded a few notable results each week. Crypto art, which was less predictable, but it wasn’t impossible to find something. And, of course, given the zeitgeist of the time, I would search Metaverse and be met with all kinds of fascinating developments, uses of VR, curious investments with the potential to do all sorts of things. The Apple Vision Pro was released in February of 2024, only two short years ago, at a time when the Metaverse may have been conceptually struggling, yes, but it had not yet been deemed officially dead. Well, it may have happened a month ago, but I apparently missed the Metaverse’s formal (at least macroeconomic) funeral.

According to Sarah Perez at TechCrunch last month, “Meta’s enormous bet on virtual reality ended last week, with the company reportedly laying off roughly 1,500 employees from its Reality Labs division — about 10% of the unit’s staff — and shutting down several VR game studios, according to The Wall Street Journal. It’s a huge reversal for a company that, just four years ago, staked its entire identity on the concept.”

I remember one of Meta’s first official commercials, after it unveiled its new corporate moniker. In the ad, titled The Tiger and the Buffalo, kids at an art museum are literally immersed into a jungle painting that comes to life around them, the titular Tiger welcoming them inside by saying, “This is the dimension of imagination.” There was a lot of dancing, a lot of anthropomorphic animals, a general atmosphere of celebration.

And here we are, the party ending with a whimper so weak that even I, a metaverse enthusiast and writer, did not hear it.

TechCrunch continues, saying “Meta’s vision at the time was that the metaverse would be the next big social platform, where users connected in a virtual world via Meta’s Horizon Worlds app and played games on their VR headsets. Fast-forward, and the metaverse has effectively been abandoned in favor of AI.” They list a staggering number of metaverse programs and apps now forsaken in Meta’s more recent bid to scale up its AI arm. No company, regardless of size, can apparently beat back the ceaseless reptilian urge to pivot into AI.

The Metaverse will be remembered by most, if at all, as little more than a marketing buzzword. And a buzzword that never meant much, at that. It was extremely difficult to describe what “the metaverse” even was. Virtual world apps like Hyperfy and Somnium Space utilized VR and allowed for interconnected spatial experiences, yet they never grew into anything approaching the navigable digital ecosystem many Metaverse proponents saw as the true evocation of the term. It was always confusing conceptually. It required an insane amount of consumer buy-in on hardware (even Apple has struggled to find a raison d’être for its Vision Pro headsets). And the way it was sold to the public (often through demonstrations of virtual workplaces) was lame and uninspiring. As for an epitaph, TechCrunch writes, “Besides being overhyped by investors and analysts alike, initial versions of the metaverse were just bad products. The goofy, soulless avatars didn’t even have legs, and one metaverse selfie of Meta CEO Mark Zuckerberg was so bad it even became a viral meme. In short, Meta was overpromising a future while its product still under-delivered.” Let us not forget that the above breakdown focuses on Meta itself, especially its flagship (and barely used) metaverse product, Horizon Worlds. Unfortunately, by branding itself “Meta” and spending billions on marketing, that company lodged itself in the minds of many as synonymous with the metaverse itself. When Meta’s bet died, so did the concept it carried.

Many of us here know that the metaverse’s bones remain intact. Virtual world apps continue to exist. Hyperfy is still in wide use by developers and innovators. Long-running life simulations like Second Life continue to boast devoted userbases. But the term “metaverse,” itself borrowed from Neal Stephenson’s 1992 novel, Snow Crash, is likely no more. Too much negative association. Made into a laughingstock. When I say “the metaverse is now officially dead,” I do not mean its platforms and parts and dedicated users, nor its nation of architects; I mean simply the terminology. Next time, the name itself should be too much of a mouthful for one tech misstep to swallow whole and call its own.

DeCC0 of the Week

This week’s DeCC0 is none other than #4988, who otherwise goes by Akilchi:

Art in the Wild

Quote of the Week

“With color one obtains an energy that seems to stem from witchcraft.”

Do you have some news that simply must be shared? Send us a DM