Three. BIG. Things. 2/15

If you missed it, we got it. An art, tech, whatever newsletter.

Don’t miss an edition, hit the ☝️☝️ subscribe button ☝️☝️ right up here. And catch up on every edition that passed you by.

This Week’s Three BIG Things:

Blackdove is REALLY Blowing This Whole “Being a Company” Thing

The AI Exodus Continues, Lest You were Unaware

The Doorbell Company that Became a Dog Whistle (no pun intended)

Okay, Let’s Get On With It

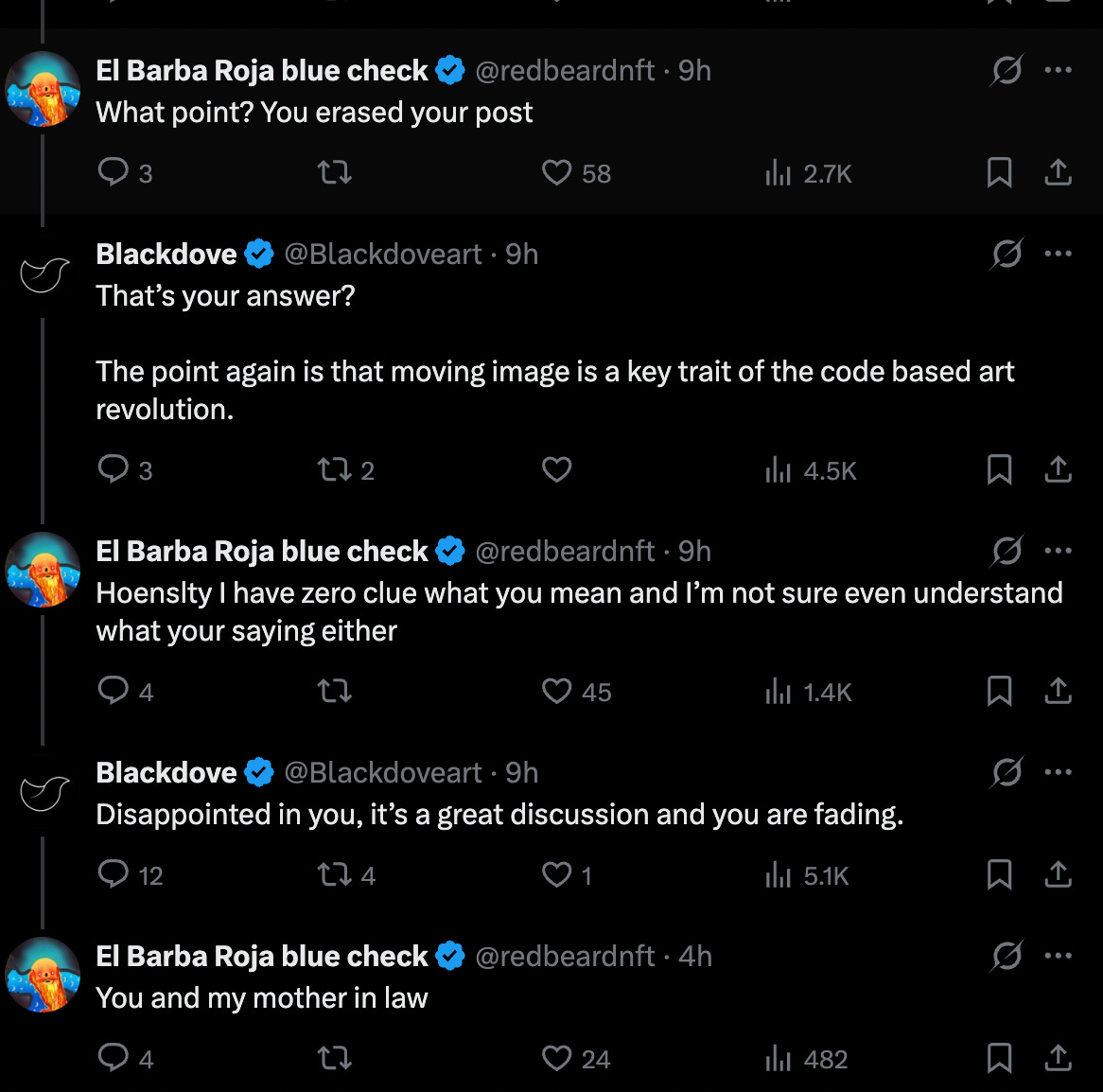

1. Blackdove Can’t Stop Orienting Itself as the Enemy

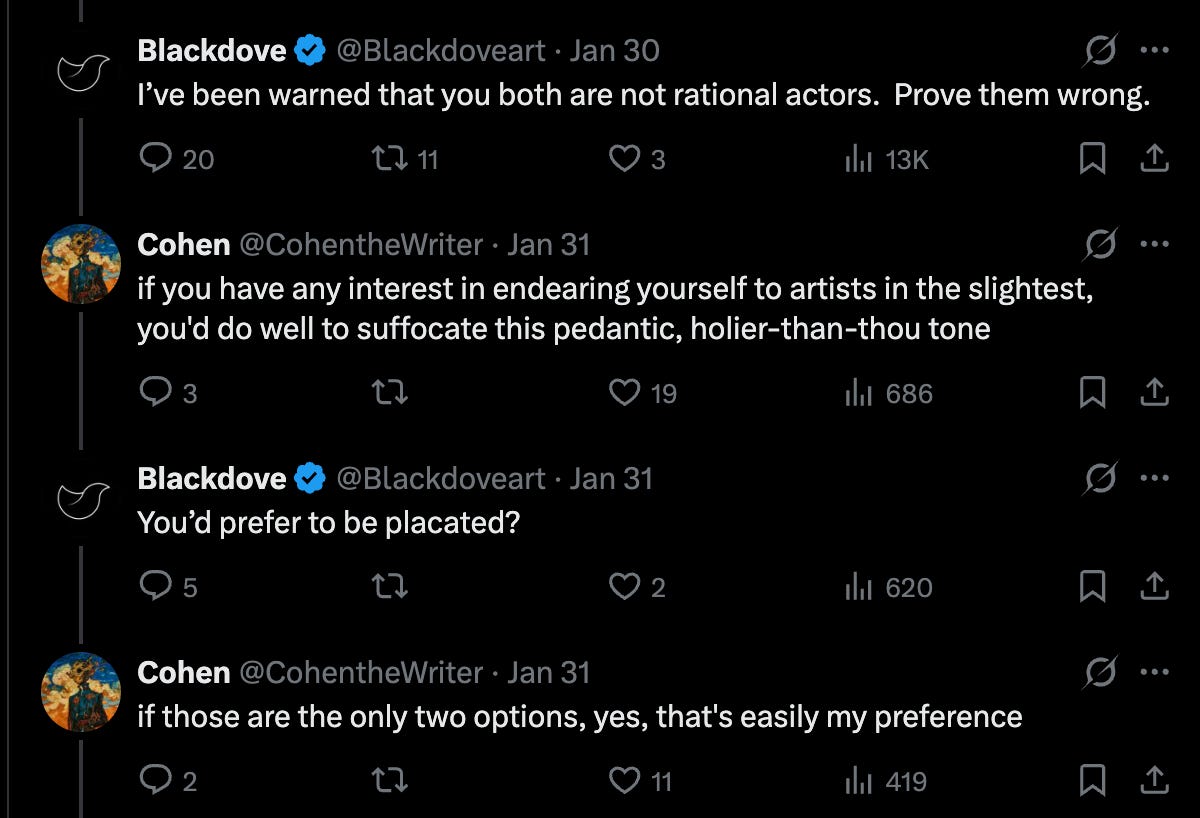

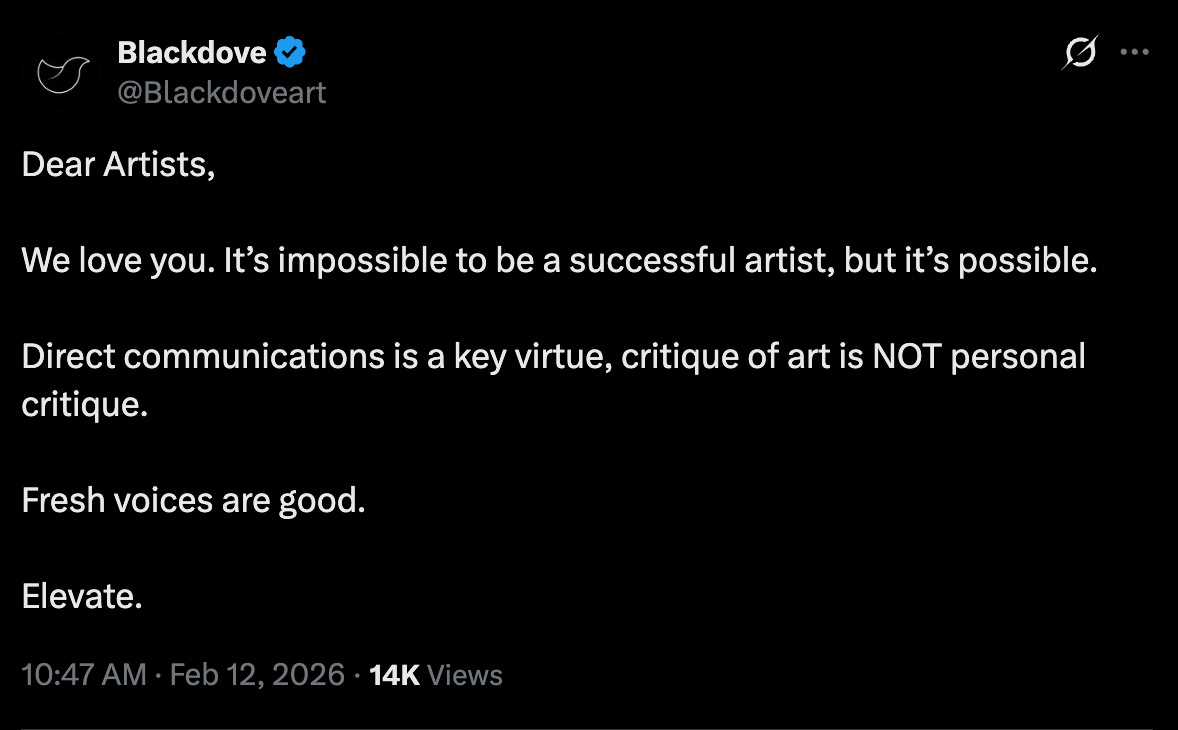

I’ll admit, this doesn’t really count as “news,” but something very strange and honestly fascinating is afoot. I became aware of it maybe a touch earlier than others, after an interaction about two weeks ago between myself, Daïm, Max Osiris, and the guest of honor in this column, whoever the fuck is running the Blackdove Twitter page. Here’s a taste of what that original interaction included:

First we have the Blackdove social media manager hopping untagged into a post to call both Daïm and Max Osiris “not rational actors,” then treat all three of us pedantically.

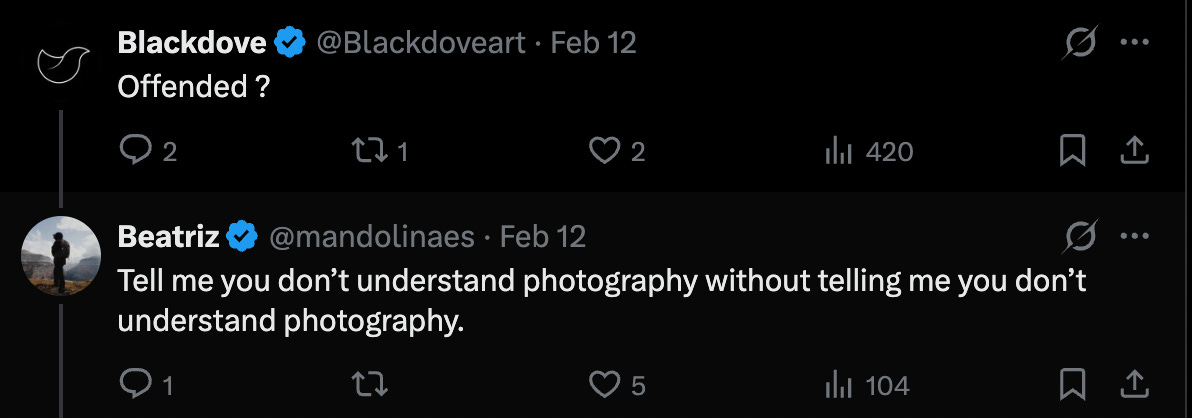

Which was followed quickly by misspelling the name of curator (and agreed-upon royalty), Eleonora Brizi:

That interaction alone had Daïm and me off in a separate chat asking each other whether the Blackdove Twitter was being run by some AI agent; no human being could write with such stilted diction, such undeserved panache, so little emotional stability. Only a few days before that all happened, Blackdove had announced its acquisition of Foundation, which had been one of the few remaining independent crypto art platforms. One would think that Blackdove —which describes itself as “a digital art platform that allows you to stream, collect and display artworks from leading digital artists across the globe”— would be doing its absolute best to ingratiate itself with the huge host of crypto artists it sought closeness to with its purchase of Foundation. At the very outset of their website’s About page, Marc Billings, Blackdove’s Founder/CEO, writes “With Blackdove, artists can now sell their work in limited editions backed by NFT, license their artworks via in-app purchasing, and earn royalties on subscriptions.” Appealing to artists is the crucial base-layer of Blackdove’s entire platform: artists provide the raw material that Blackdove then promotes, packages, and licenses to those who purchase its software (they sell digital screens, I think, and have an app where you can license artworks to show on those screens). A reasonable expectation would be that Blackdove would be using their resources to strengthen relationships with artists by any means, widen their reach into the ecosystem they just spent a pretty penny to penetrate, and onboard anyone who may be financially useful to them going forward.

And yet, over the last few days, the tack taken by Blackdove’s social media arm has been downright bizarre. In the span of a half-week, they’ve turned themselves into a uniform laughingstock, literally bringing all of crypto art, high and low, artist and collector, critic and shitposter, together in scorn. Imagine writing a post so bad it entices even an eternally-optimistic-to-a-fault collector like Benny Redbeard to talk shit.

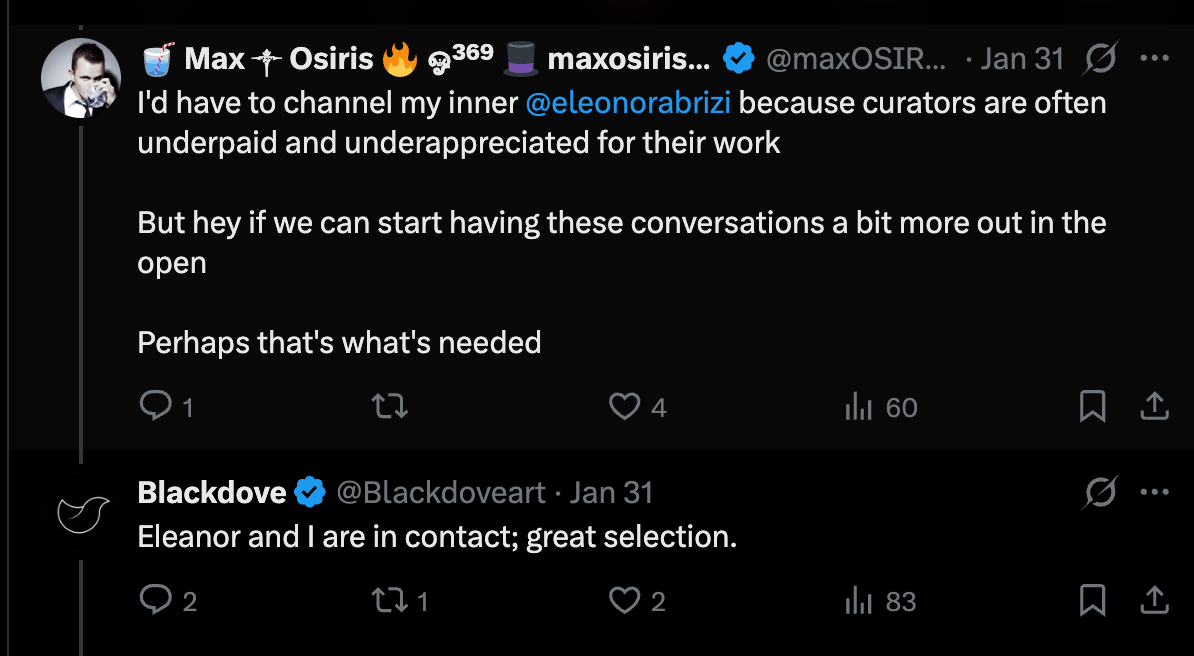

Imagine, not! Here’s the interaction which led to Blackdove becoming the crypto art jester du jour on Wednesday.

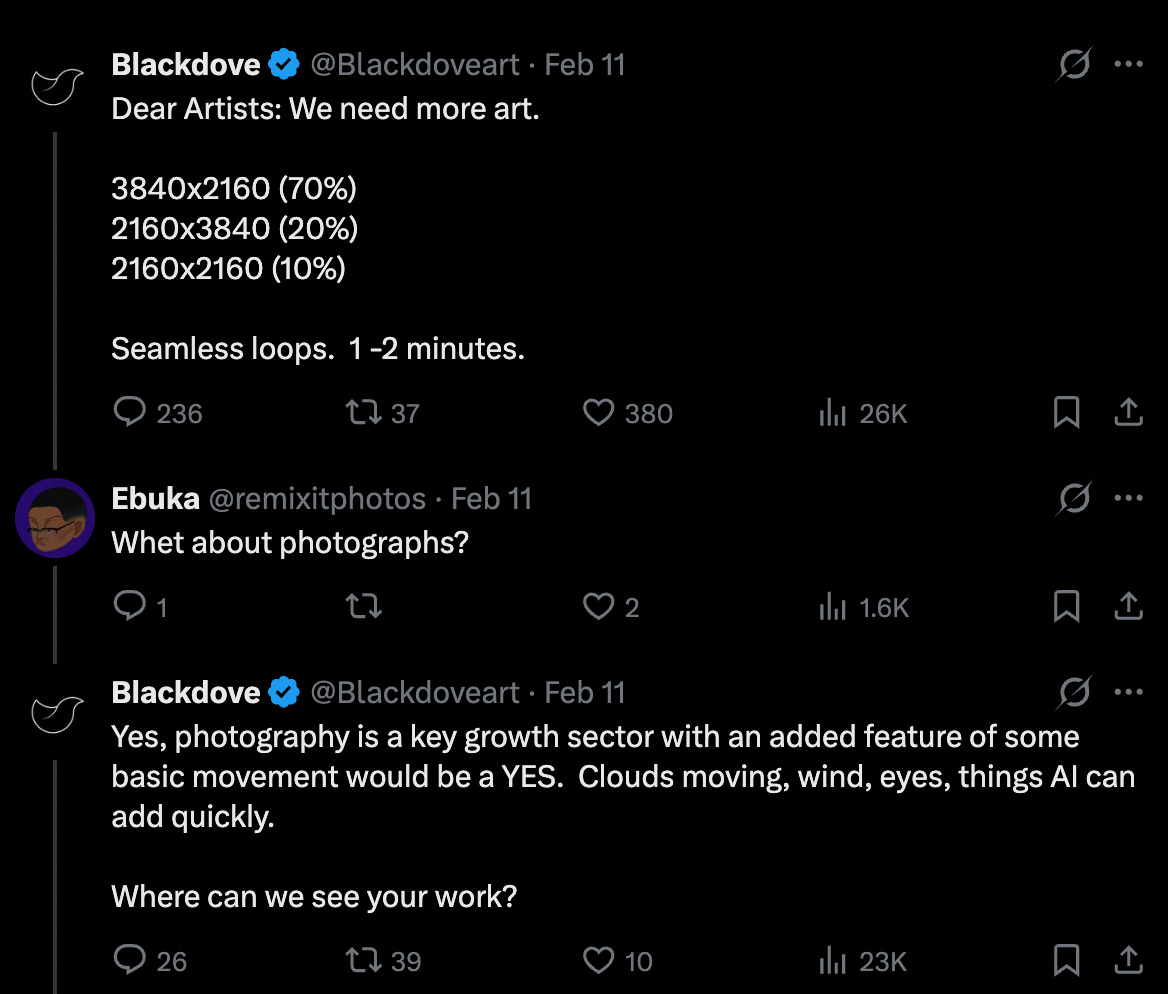

As many (many, many) artists have publicly commented, there could be little more anti-artist sentiment than telling a photographer to simply augment their work with AI so as to appease the market. It was not just a one-off event, either. They do it again elsewhere, to really tone-deaf effect, when simply commenting upon an artwork shared with them:

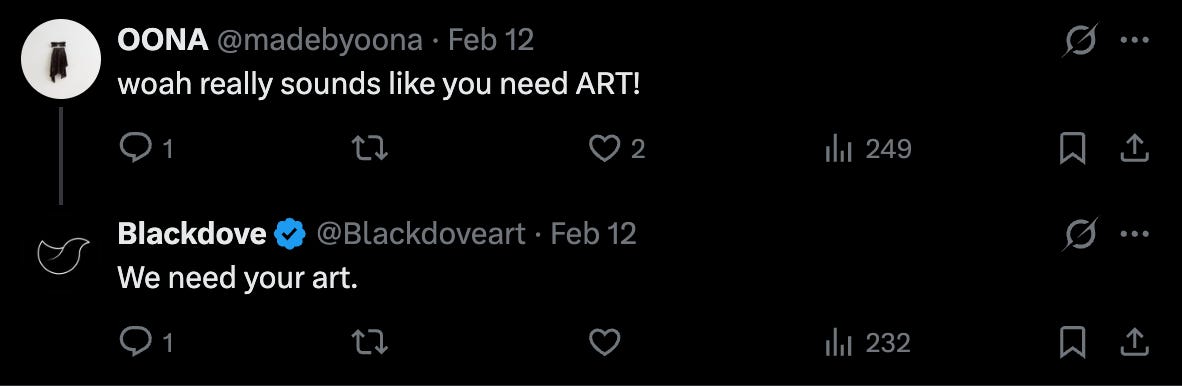

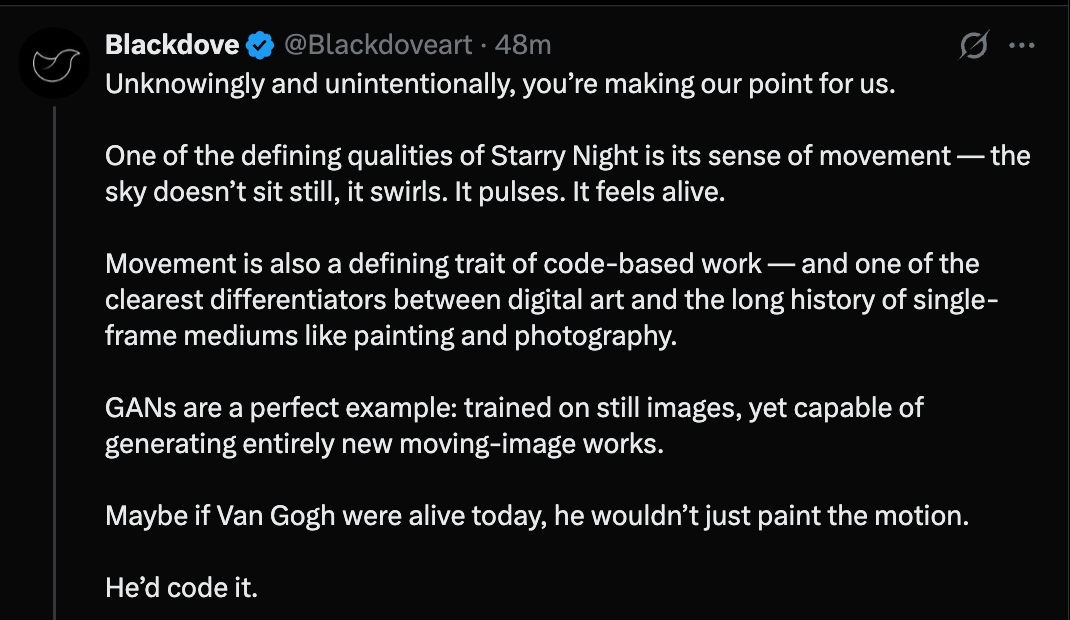

They’re, like, super adamant about this movement thing. Throughout the hundreds of social media interactions I’ve sifted through, the only thing I can really glean about Blackdove’s business model is that their clients want digital screens with pictures on them that move. And in subsequent posts, they literally try to recontextualize the entire digital art continuum by arguing that movement is the key trait of code-based art. That’s not even a paraphrase, it’s basically a direct quote.

To suggest an artist reorient their creativity so as to appeal to customers, I mean that’s so clearly at-odds with every value of this movement. They’re not even pretending to hide or diminish their devotion to market conventions, and with such douchebag nonchalance. Worse still, Blackdove responded with strange, almost Trumpian snark to artists who Tweeted back at them.

Whoever runs this social media account really seems hellbent on picking unnecessary fights with the crypto art public. Here they are bringing a collector named Yang0s to the point of actual rage. Over here, they get into a sparring match with Betty, the creator of the DeadFellaz PFP. You can find them becoming defensive, and self-aggrandizing, and dismissive, and preachy, and in the post below, they come across as nonsensical. I mean, for real, what the fuck does this mean?

At some point, the Blackdove team decided that much of the vitriol came from a misunderstanding of their product. They separate the goals of Foundation and Blackdove in the following post, and, yes, once again, they mention they really only care about moving images.

Apparently, their business model being dependent on animation is meant to explain their demeaning tone, though this does nothing to address their root problem, which is that they paid handsomely for that aforementioned “broader audience” and then set about systematically alienating them.

What we see consistently across their Twitter interactions is an over-reliance on “business” speak, which feels even more anachronistic in an artistic environment. Calling photography “a key growth sector,” for example. Here, instead of apologizing like a normal person, they “will zoom out.” “Producing consistent revenue.” “Self-market.” “Devise a test, and… executing.” “Reports of such concerns…manual onboarding.” Really, the financial terminology is out of hand. And this one is lifted directly from some AI-generation of a marketing conference:

“The goal of the message was to offer a relatively low lift model for photographers to gain increased exposure.”

My favorite of all, however, is this reply that simply says, “Connecting online to IRL will unlock sales.” Great insight!

Blackdove really just does not seem to understand in the slightest what works in this movement. It takes probably two weeks of sporadic observation on crypto art Twitter to see that successful influence here is often a result of unbridled positivity, frequent positive interaction, flamboyant spending, and performative celebration. This is the bread-and-butter of almost every influencer or high-profile collector. It was why anyone who tweeted “GM” in 2021 and 2022 was met with rampant engagement. It’s basically the entirety of Benny Redbeard’s brand, his refrain, “This is how we build” tacked onto any smidgeon of good news. I’m not here to gripe, this is the way our collective communication has veered, and it’s what works. Blackdove’s social media team taking such a marketeering, antagonistic tone with collectors and artists, however, just demonstrates how little research they have done into crypto art, what minuscule experience they have had here previously, and how uninterested they are in actually meeting artists where they are. After Blackdove posted their anti-photography sentiment and took heat for it, the obvious, even-a-monkey-would-know-this response would be to be receptive to feedback and eager to learn. Pander to artists. Even if they didn’t mean it, it’s what the people here want. Spend two hours on crypto art Twitter, and that’s immediately apparent. Blackdove’s behavior only demonstrates how uninterested the company is in the community it paid handsomely to approach.

Also, as a side-note, this isn’t how businesspeople talk either. This is how people cosplaying businesspeople talk.

To finish, here’s some quick hitters that made me stop and really wonder what the hell these people are thinking:

1. Responses like this do again make me think an AI is handling their social media interactions. Who talks like this?

2. Well, what was originally a side-note to this piece very easily could have become the central topic. For some insane reason, Blackdove posted that if Vincent van Gogh were alive today, he would probably use AI to add motion-effect to Starry Night instead of going through all the trouble of becoming a master craftsman. This situation so blew-up it gained its own trending topic overhang.

3. I really only want to give Redbeard credit when I have to, but man, he really cooked here:

If the goal is to systematically alienate and infuriate the artists whose work they need to sell their products, congratulations are in order, Blackdove, you all are prodigious. If the goal is to mindlessly gain Twitter engagement, then, I suppose they’ve done that as well, though I myself doubt that all press is good press in this situation. If the goal is literally anything else, then, my goodness, someone needs to be fired or promptly chewed-out because I cannot remember any single figure or institution in crypto art so quickly, precipitously, and fundamentally losing any shred of good-will they may have grandfathered themselves into.

Separately: Just because an image is “digital” or “animated” or “code-based” does not necessarily make it artistic. One of the examples Blackdove propped-up as emblematic of the work they admire is this mildly-moving photograph, Rainbow Houses, by Franck Lefebvre. The image is pretty, but it’s pretty clearly a middling artwork and not overly original, yet Blackdove describes it like this:

What exactly about the “video and tools” makes the work great? Do moving aspects alone —only the water’s surface in the above image— really increase the quality or depth of the work, or do they just make it more appealing to customers looking to hang screensavers on their walls? Is the latter really enough for something to be deemed “great”? This is yet another exaggerated example of the single greatest ill plaguing crypto art through the last five years: without any kind of accepted critical eye, the only thing that differentiates good work from bad work is whether someone is willing to pay for it. But most people —and I’d stand to reason Blackdove’s customers chief among them— lack taste. It is the gallerist’s role, in theory, to increase their ecosystem’s taste. But Blackdove is apparently only interested in pandering to the lowest common denominator, suggesting over and over again to artists that if they want to be successful, they should set aside their meager creativity and simply do the same.

Apparently, they alone know what “the art world wants.”

If you need some final catharsis, try reading this, this, this, this, this, this, this, this, or this (which is mean).

2. What Is The AI Security Exodus Really Telling Us?

It isn’t very surprising anymore when an AI researcher quits their lab in protest. Probably the most high-profile of these departures was in May of 2024 when Ilya Sutskever, former OpenAI Chief Scientist and co-founder, left the company after it reoriented itself into a for-profit model. Rachel Metz at Time Magazine had written about how “Sutskever clashed with [OpenAI CEO Sam Altman] over how rapidly to develop AI, a technology prominent scientists have warned could harm humanity if allowed to grow without built-in constraints, for instance on misinformation.” How quaintly AI was written about just two short years ago. Metz also mentioned, “Jan Leike, another OpenAI veteran who co-led the so-called superalignment team with Sutskever, [who] also resigned. Leike’s responsibilities included exploring ways to limit the potential harm of AI.”

That departure was heavily covered by mainstream media outlets, usually so as to make some grand doomsday claim about AI coming to kill everyone (we had a limited social understanding of what “AI safety” meant). At that time, AI and ChatGPT were synonymous; there were no true competitors. When those responsible for ChatGPT’s safeguarding left OpenAI, many observers for the first time conceived the potentially apocalyptic consequences of AI developed without limits.

This week, two more safety researchers left their respective AI labs, and both signaled similar consternations with how their companies were seeking to change the world. As Liv McMahon reports for the BBC, “An AI safety researcher has quit US firm Anthropic with a cryptic warning that the ‘world is in peril’. In his resignation letter shared on X, Mrinank Sharma told the firm he was leaving amid concerns about AI, bioweapons and the state of the wider world.” McMahon goes on to discuss how Sharma, “said he had ‘repeatedly seen how hard it is to truly let our values govern our actions’ - including at Anthropic which he said ‘constantly face pressures to set aside what matters most’.” It’s not difficult to parse the ambiguity out of Sharma’s warning. With rampant over-investment in AI, safety concerns are naturally being waylaid by a need to develop newer and better and more powerful models more and more rapidly, caution be damned.

In a similar vein, Allison Morrow at CNN writes about, “Zoë Hitzig, a researcher with OpenAI for the past two years, [who] broadcast her resignation Wednesday in a New York Times essay [titled ‘OpenAI is Making the Mistakes Facebook Made. I Quit.’], citing ‘deep reservations’ about OpenAI’s emerging advertising strategy. Hitzig, who warned about ChatGPT’s potential for manipulating users, said that the chatbot’s archive of user data, built on ‘medical fears, their relationship problems, their beliefs about God and the afterlife,’ presents an ethical dilemma precisely because people believed they were chatting with a program that had no ulterior motives.”

Really, the hits keep coming. Morrow goes on to tell how, “On Tuesday, The Wall Street Journal reported that OpenAI fired one of its top safety executives after she voiced opposition to the rollout of an ‘adult mode’ that allows pornographic content on ChatGPT.” This comes after she mentions the no less than seven employees at Elon Musk’s xAI —including two co-founders and five other staff members— who resigned in the last few weeks. “While it’s not unusual for high-level talent to bounce around in an emerging industry like AI,” Morrow notes, there simply is not an abundance of high-level AI researchers that can be re-slotted around an org. chart at will. The loss of key employees would be a major blow to any AI company, which is why Mark Zuckerberg last year was offering hundreds-of-millions of dollars to top AI researchers if they left their labs and came to work for him at Meta.

As Morrow later addresses, “High-level defections have been part of the AI story since ChatGPT came on the market in late 2022. Not long after, Geoffrey Hinton, known as the ‘Godfather of AI,’ left his role at Google and began evangelizing about what he sees as existential risks AI poses, including massive economic upheaval in a world where many will ‘not be able to know what is true anymore.’” But does the consistency of defection, to use Morrow’s word, somehow undermine the reasons behind it? Have we so internalized a pessimism surrounding AI safety that the departure of almost a dozen AI researchers hardly sounds an alarm?

These are not Geoffrey Hinton’s doomsday scenarios playing out in real time. These are things more akin to our collective realization in the 2010s that social media had been too widely deployed before we understood its effect on our psyches. Just look back at the title of Hitzig’s New York Times essay: “OpenAI is Making the Mistakes Facebook Made. I Quit.” I don’t mean to say that the concerns being mentioned are somehow less scintillating than Skynet going live, but it’s apocalyptia of a different flavor that these AI researchers seem to be evoking. Ambient manipulation, social misinformation, unpredictable job loss. It’s not an AI system taking control of all the world’s nukes and letting ‘em rip. It’s something slower, deeper-seated, and multi-sectored.

Mrinank Sharma, the employee who left Anthropic, did so because, in his own words, of “a whole series of interconnected crises unfolding in this very moment.” AI acting as an accelerator of strife feels much more believable and nearby than it being singularly responsible for that strife, in the same way that Facebook —when it was spreading political disinformation— didn’t create problems of social division but massively amplified them, managing to pour concrete around a problem before most had even noticed it was happening.

I’m no AI doomer, but I’m also not blind to the third and fourth-order effects of these models being unleashed with broader reach, higher power, and fewer guardrails. What these employee defections tell us is not that we are headed for a nuclear apocalypse, but that AI is changing the world under our feet so evenly that we barely feel a quake. Sharma left Anthropic to “pursue a poetry degree and writing.” As he said in a reply, “I’ll be moving back to the UK and letting myself become invisible for a period of time.” He seems to be saying, What else can I do, in the calm before the storm, but enjoy what time I have left?

3. Ring’s Super Bowl Commercial Was so Bad, it Eroded All Trust in the Company

If you missed this Psy-Op gone wrong, take a quick look before we go ahead.

Oh, Ring. Sweet, sweet Ring. What specifically was it that led to such a backlash? Was it the way they made a suburban neighborhood look like it was about to be bombed by drones?

Was it the clunky wording of “Search Party from Ring uses AI to help families find lost dogs?”

Was it that the very name “Search Party” evokes images of law enforcement with bloodhounds and German Shepherds hunting criminals in the forest?

Or maybe it was their admission that the software in question had already been uploaded to every existing Ring camera and was “available for free, right now.” You know, because tech companies love giving new products away for free without any ulterior motives.

Whatever the reason, Ring’s Super Bowl commercial caused real hysteria not only amongst their users, but throughout a U.S. citizenry already quite concerned about AI surveillance. As Kevin Collier reported for NBC News, “The social media response [to Ring’s commercial] was swift. One person called it ‘the quiet rollout of a national surveillance regime,’ while another joked ‘surveillance state but make it adorable.’ One video on TikTok with more than 3 million views called the commercial ‘terrifying.’”

Ring, owned by Amazon, has learned very quickly that hostility to AI is not some passing internet fad or niche belief but a widespread sentiment that crosses political divides. Trevor Hughes’ article for USA Today sums up the overall response pretty succinctly in just his title, “Why are people disconnecting or destroying their Ring cameras?” He goes on to quote Jay Stanley, a senior policy analyst with the ACLU, who said “‘I think (the commercial) surprised a lot of Americans by revealing just how powerful surveillance networks backed by AI have become…That power may be applied to puppies today, but where else might it be applied? Searches for people wearing t-shirts with certain political messages on them?’” Speaking for myself, I thought we were probably a few steps away from a future like this. But if Ring is promoting a product that can distinguish between dogs by size, breed, coloration, and other minute features, surely they can identify me from a distance.

The rest of Hughes’ article is a nice encapsulation of all the ways AI-aided surveillance has already been used by law enforcement to various ends. “Surveillance systems like Ring and Flock [a company that makes AI-powered traffic cameras] are popular among police departments, who say they provide a powerful new tool to track stolen cars and find criminal suspects,” he writes before noting how “media reports from around the country have shown that departments that access data from Flock, for instance, have at least occasionally shared it with federal immigration officers despite local laws against it.” So already we kiss any high-minded promises of privacy goodbye. Apply a little governmental pressure, and there’s no user agreement that can’t be broken.

As per Jennifer Pattison Tuohy at The Verge, “Following intense backlash to its partnership with Flock Safety, a surveillance technology company that works with law enforcement agencies, Ring has announced it is canceling [a planned] integration.” Ring cited as reason for the cancellation that “the planned Flock Safety integration would require significantly more time and resources than anticipated,” though the timing of their announcement seems clearly like a result of public outrage. And that outrage was coming from laypeople and experts alike. As Pattison Tuohy wrote in a separate Verge article published two days prior, “Privacy expert Chris Gilliard told 404 Media that the ad was ‘a clumsy attempt by Ring to put a cuddly face on a rather dystopian reality: widespread networked surveillance by a company that has cozy relationships with law enforcement and other equally invasive surveillance companies.’”

What’s abundantly clear is that fears about AI surveillance are neither passing nor niche. And Ring’s ad may have only given these fears more solid shape. Trevor Hughes continues in his article by quoting “The ACLU’s [Jay Stanley, who said] the public doesn’t fully appreciate just how effective centralized video databases have become, especially when users are able to easily search for key words or descriptions ‒ a far cry from the days when police had to manually view each clip.”

This feels very reminiscent of when ChatGPT was first released in late-2022/early-2023, and people en masse realized just how advanced this technology already was. That early-version ChatGPT did not feel like an early prototype, it felt like a fully-fledged life-changing product. Ring has inadvertently done a similar thing; their ad was meant to be a celebration of AI surveillance’s second-order benefits —the company boasts that Ring’s Search Party has returned a lost dog every day— but instead only served to remind an already skittish public of many other second-order dangers. How else can one interpret this commercial besides “Wow, everything is being watched”? It’s an especially ill-advised message to deploy into an American public that famously decries any attempt to subjugate its basic liberties.

This Ring “scandal” also links to another very high-profile bit of oddness happening in the United States: the disappearance and alleged kidnapping of Nancy Guthrie, mother of NBC News anchor, Savannah Guthrie. The Guthrie saga has been slowly unfolding over a period of weeks and no suspects have yet been named —nor has Guthrie’s fate been determined. Last week, after accessing Guthrie’s Google Nest doorbell cam (a Ring competitor), the FBI released a photo of a masked man who had arrived at Guthrie’s house moments before the suspected kidnapping. The masked man appears, covers the camera, vanishes into the house, and is never seen again. Many questioned how the FBI could have access to this footage, as Guthrie did not currently have the necessary Nest subscription required to store information for user playback.

In his NBC article, Collier quotes, “Matthew Guariglia, a senior policy analyst at the Electronic Frontier Foundation, a nonprofit that advocates for digital rights, [who said] that while the Guthrie case was not explicit evidence that tech companies store video footage even when people don’t have an active subscription, he found it plausible. ‘Google would not be the first company whose devices collected and stored data, even when the people who owned those devices thought they were no longer doing so.’”

So all that data, round-the-clock surveillance of many millions of front yards, stoops, porches, and front doors around the country, uploaded automatically to some cloud and accessible by default to the parent company; and, of course, whoever pressures them to hand it over. The very premise may make you and me quake in our boots, though naturally, many Americans have heretofore ignored the privacy concerns for the sake of security and convenience. It seems crazy that a single misplaced, poorly-timed advertisement could undo all that trust, fundamentally altering public sentiment towards a product already hooked-into 16% of American homes.

And yet, some Super Bowl ads really are that bad.

DeCC0 of the Week

Our DeCC0 of the week is one beautiful character, our friend, Amesgarda:

Art in the Wild

Quote of the Week

“Man is so made that when anything fires his soul, impossibilities vanish.”